Varigence Blog

Dev Diary - Embracing the new Azure Data Factory Script Activity

Earlier this month, the Azure Data Factory (ADF) team at Microsoft released a new way to execute SQL statements in a pipeline: the Script Activity.

The Script Activity allows you to execute common SQL statements to modify structures (DDL) and store, retrieve, delete or otherwise modify data (DML). For example, you can call Stored Procedures, run INSERT statements or perform lookups using queries and capture the result. It complements existing ADF functionality such as the Lookup and Stored Procedure pipeline activities.

In some cases though, the Lookup and Stored Procedure activities have limitations that the new Script Activity does not have. Most importantly, the Script Activity also supports Snowflake and Oracle.

This means that additional customizations to call Stored Procedures on Snowflake (and lookups, to an extent) are no longer necessary. You can simply use the Script Activity.
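To make this concrete, here is a minimal sketch in Python that builds the kind of JSON definition a Script Activity uses in a pipeline. The property names (`Script`, `scripts`, `Query`, `NonQuery`) reflect our understanding of the activity's JSON shape and should be checked against the ADF documentation. The helper `script_activity`, the linked service name and the procedure name are hypothetical and only serve as illustration; this is not the output BimlFlex generates.

```python
import json


def script_activity(name, linked_service, sql, is_query=False):
    """Build an ADF Script Activity definition as a plain Python dict.

    Hypothetical helper for illustration only.
    """
    return {
        "name": name,
        "type": "Script",
        "linkedServiceName": {
            "referenceName": linked_service,
            "type": "LinkedServiceReference",
        },
        "typeProperties": {
            "scripts": [
                {
                    # "Query" captures a result set (lookup-style usage);
                    # "NonQuery" is meant for DDL, DML and procedure calls.
                    "type": "Query" if is_query else "NonQuery",
                    "text": sql,
                }
            ]
        },
    }


# Assumed names: a Snowflake linked service and a staging procedure.
activity = script_activity(
    "CallSnowflakeProcedure",
    "SnowflakeLinkedService",
    "CALL stg.load_customer();",
)
print(json.dumps(activity, indent=2))
```

The same helper covers the lookup case by passing `is_query=True`, which is the point of the new activity: one shape for both procedure calls and queries.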

Varigence has been quick to adopt this new feature, which will be supported in the upcoming 2022 R2 release (available early April). As part of 2022 R2, the Script Activity is supported in BimlScript, and the BimlFlex patterns have been updated to take advantage of this new ADF functionality.

A major benefit is that a single approach can be used for all lookup and procedure calls. And, specifically for Snowflake targets, it is no longer necessary to use the Azure Function Bridge that is provided by BimlFlex to run procedures and queries on Snowflake. Even though the Azure Function Bridge has been effective (and is still in use for certain features that ADF does not yet support directly), we prefer to use native ADF functionality whenever possible because this offers the most flexibility and ease of maintenance for our users.

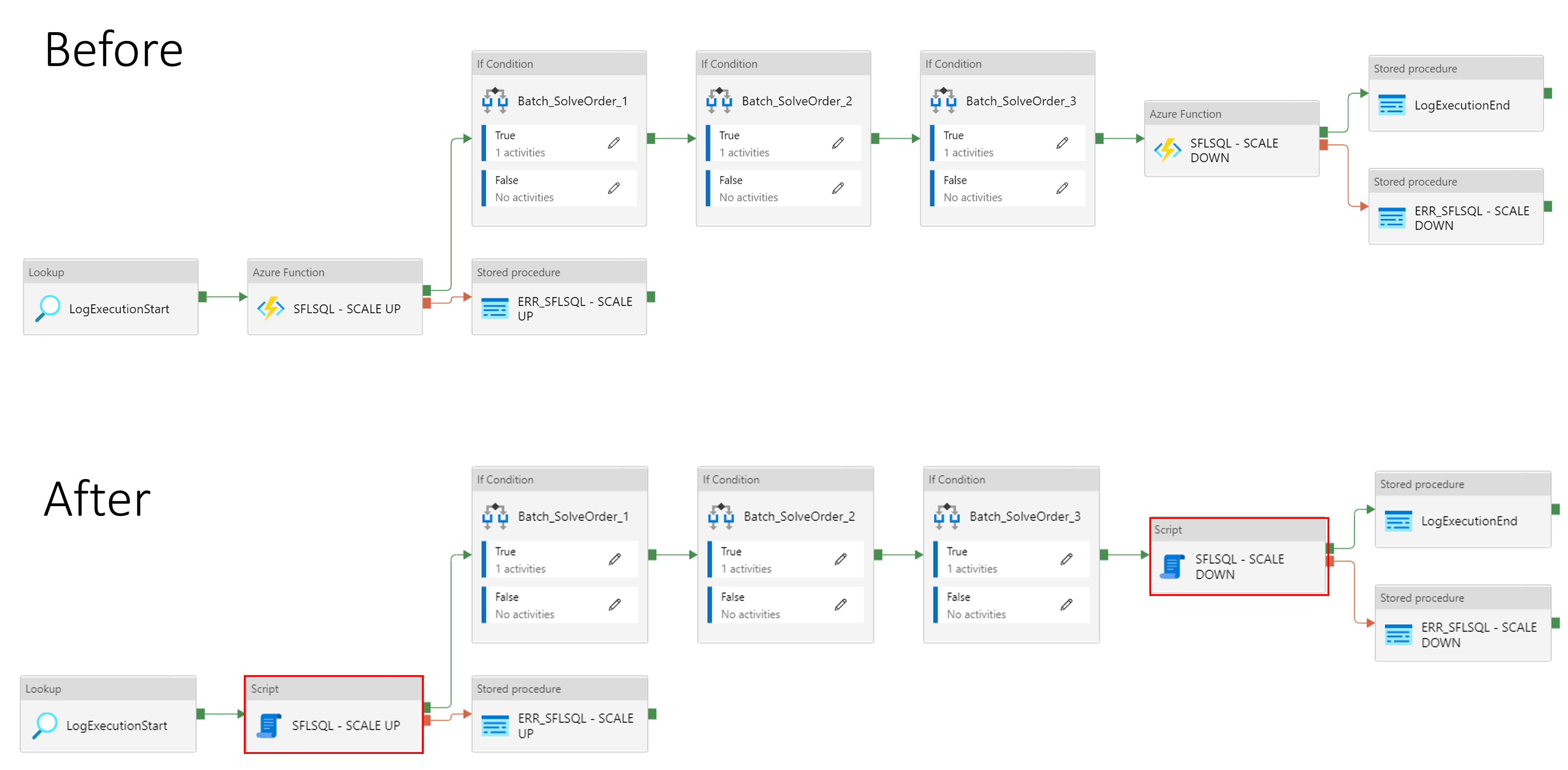

Below is an example of the changes in the patterns that you can expect to see in the upcoming 2022 R2 release. In this example, the Azure Function call has been replaced by a direct script call to Snowflake.

Dev Diary - Pushing down data extraction logic to the operational system environment

In some scenarios, when a 'source' operational system shares the technical environment with the data solution, it can be efficient to make sure all processing occurs on this environment. This way, the data does not have to 'leave' the environment to be processed.

In most cases, operational systems are hosted on different environments than the one(s) that host the data solution, which means that data first needs to be brought into the data solution for further processing.

If, however, the environment of an operational system is the same as that of the data solution, this initial 'landing' or 'staging' step does not require the data to be processed via an external route. In other words, it becomes possible to 'push down' data staging logic to this shared environment. This way, the delta detection and the integration of the resulting data delta into the data solution both occur in the environment, and not by using separate data transformations in, for example, SQL Server Integration Services (SSIS) or Azure Data Factory (ADF).
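As an illustration of the idea, the sketch below uses Python's built-in `sqlite3` module as a stand-in for the shared environment. A single `INSERT ... SELECT` statement detects the delta and loads it into staging, so no rows are moved through an external engine. The table names (`src_customer`, `stg_customer`) and the function `run_pushdown_load` are hypothetical, and the comparison is deliberately simplistic (it checks against every previously staged version of a row); it is not the Stored Procedure logic that BimlFlex generates.

```python
import sqlite3

# In-memory database standing in for the shared environment.
conn = sqlite3.connect(":memory:")
conn.executescript("""
    CREATE TABLE src_customer (customer_id INTEGER PRIMARY KEY, name TEXT);
    CREATE TABLE stg_customer (customer_id INTEGER, name TEXT, load_ts TEXT);
    INSERT INTO src_customer VALUES (1, 'Alice'), (2, 'Bob');
""")

# Delta detection and load in one statement, executed inside the database.
DELTA_SQL = """
    INSERT INTO stg_customer (customer_id, name, load_ts)
    SELECT s.customer_id, s.name, CURRENT_TIMESTAMP
    FROM src_customer AS s
    WHERE NOT EXISTS (
        SELECT 1
        FROM stg_customer AS t
        WHERE t.customer_id = s.customer_id
          AND t.name = s.name
    );
"""


def staged_rows(connection):
    """Return the current number of rows in the staging table."""
    return connection.execute("SELECT COUNT(*) FROM stg_customer").fetchone()[0]


def run_pushdown_load(connection):
    """Run the delta load in-database and return how many rows were added."""
    before = staged_rows(connection)
    connection.execute(DELTA_SQL)
    connection.commit()
    return staged_rows(connection) - before


first = run_pushdown_load(conn)   # initial load: every source row is new
second = run_pushdown_load(conn)  # nothing changed in the source
conn.execute("UPDATE src_customer SET name = 'Robert' WHERE customer_id = 2")
third = run_pushdown_load(conn)   # only the changed row is staged
print(first, second, third)       # prints: 2 0 1
```

In a real deployment the statement would typically live in a Stored Procedure on the target platform and be invoked by the orchestrator, which only issues the call and never handles the data itself.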

Pushdown is sometimes referred to as 'ELT' (Extract, Load and then Transform), as opposed to the 'ETL' (Extract, Transform and then Load) paradigm. While these terms may not always be clear or helpful, generally speaking ELT / pushdown means that most code runs directly on the native environment, whereas ETL signals that most code runs as part of a separate transformation engine not directly related to the environment.

Currently in BimlFlex, the source-to-staging processes that follow the ELT paradigm always use either an SSIS or an ADF Copy activity to move the data onto the data solution environment before the processing can start. In the scenario outlined above, when the operational system is hosted on the same environment as the data solution, this landing step can be skipped.

With the 2022 R2 release, it will now be possible to use the new Pushdown Extraction feature to deliver this outcome, and make sure the integration of data in the solution can be achieved without requiring the additional landing step or Copy Activity.

Enabling Pushdown Extraction at Project level will direct BimlFlex to generate Stored Procedures that replace the initial landing of data using SSIS and/or ADF.